2023 EDIT: While this post was mostly about how to migrate from Crashplan to a proper backup, it's still very relevant if you want to know how to do 3-2-1 backups.

I always had the feeling I was doing backups wrong. I’ve done them since I can remember, but I could never shake the thought that “there has to be a better way”.

From early on, and we’re talking nineties, I mashed together a home-brewed set of scripts that did the job. That then evolved into an intricate solution involving downtime and Clonezilla, and eventually into the ever-present rsync, where I tried to hammer life into a differential backup. That is, up until 2010, when I smashed head-first into Crashplan and started doing three-tier backups.

Crashplan obviously allowed you to back up to Code42’s cloud, but where it excelled was in saving data to multiple zones.

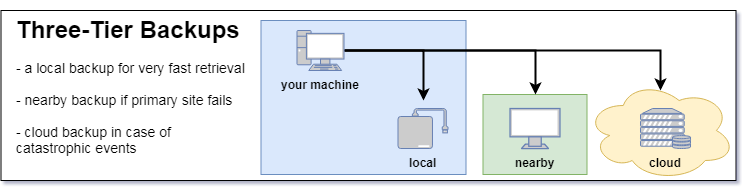

3-2-1 backups are the form of backup recommended by US-CERT, and they excel at several levels:

- Local Backup – fastest to restore from but the first to disappear if instead of a broken hard drive you experience theft or a fire. I know a couple of people and at least one business who only had local backups and were left empty-handed after robberies.

- Nearby Backup – a little slower as you’ll have to drive somewhere but fast enough to go live on the same day, prone to area-wide disasters like floods. I don’t know a single person who experienced both local and nearby backup failure, but it may happen and if it does…

- Cloud Backup – the safest of them all, also the slowest; depending on the amount of information, it may take a long time to download the full set of data (I’ve witnessed a friend recover taking more than 9 days). Nothing feels safer, though, than knowing your data is a thousand miles away, stored inside a former nuclear bunker, by a team of dedicated experts.

Crashplan, unlike most of the industry in 2010, was unparalleled at this kind of 3-2-1 backup, and it only took a little configuration.

While the only cloud you could back up to was theirs, it was a persuasive product that let you send your data almost anywhere: another drive in your computer, a friend’s machine across town or your own server on the other side of the Atlantic.

Cloud-wise, for obvious reasons, it was a walled-garden product; there was no compelling reason for Code42 to allow you to use the competition.

Until I had to re-evaluate my options…

And it’s fortunate that sometimes you’re forced to take the other side of the pathway. You see, 9 years ago Stefan Reitshamer started what is now known as Arq Backup, and it’s fundamentally the tool we have been waiting for. It has everything the alternatives have, with the fundamental difference of freeing you from a specific cloud.

Your software. Your data. Your choice of cloud.

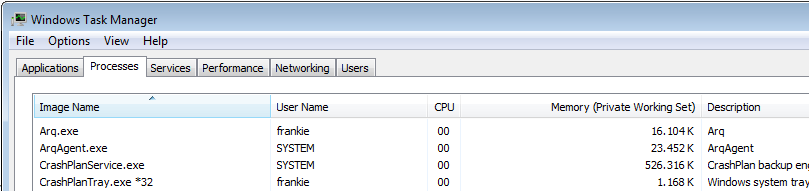

Arq Backup gets all the fundamentals correct. What a nice surprise it was when I dug up resource usage:

The values above are at idle. During backups Arq will go up to 100MB and take about 5% CPU, where Crashplan went all the way up to 800MB and ate about 8%. CPU values will obviously differ depending on your processor.

What about price?

Crashplan for unlimited data charged $9/month.

Arq Backup has a single fee of $50 and then it depends. It’s your choice, really.

Prices for Q3 2018:

| Provider | $/GB/month (2018) | $/GB/month (2023) | GB to reach Crashplan’s $9 (2018) |
|---|---|---|---|
| Amazon S3 | $0.021 | $0.023 | 428 GB |
| Backblaze B2 | $0.005 | $0.005 | 1800 GB |
| Google Nearline | $0.010 | $0.010 | 900 GB |
| Google Coldline | $0.007 | $0.004 | 1285 GB |
| Microsoft Azure | $0.018 | $0.018 | 500 GB |

Your mileage will vary, but my 200GB of digital trash are currently costing me $1/month on Backblaze B2.
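The last column of the table is just Crashplan's $9 divided by each provider's per-GB price, rounded down. Here is a minimal sketch of that arithmetic, assuming `awk` is available. The function names `breakeven_gb` and `monthly_cost` are made up for illustration, and the prices are the 2018 figures from the table.

```shell
#!/bin/sh
# Hypothetical helpers; prices are the 2018 figures from the table above.

# GB you can store before matching a flat monthly fee (rounded down).
breakeven_gb() {  # usage: breakeven_gb <flat_fee_usd> <usd_per_gb_month>
    # The tiny epsilon guards against floating-point results like 1799.9999...
    awk -v fee="$1" -v price="$2" 'BEGIN { printf "%d\n", int(fee / price + 1e-9) }'
}

# Monthly bill for a given amount of data.
monthly_cost() {  # usage: monthly_cost <gb> <usd_per_gb_month>
    awk -v gb="$1" -v price="$2" 'BEGIN { printf "%.2f\n", gb * price }'
}

breakeven_gb 9 0.005    # Backblaze B2 -> 1800
breakeven_gb 9 0.021    # Amazon S3    -> 428
monthly_cost 200 0.005  # 200 GB on B2 -> 1.00
```

Swap in the 2023 prices to see how the break-even points moved.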

Should I also go with Backblaze’s backup client? Definitely not!

While I do appreciate what Backblaze has been doing from the very start (their blog is mandatory reading), switching clouds with Arq is as simple as pointing to another location.

For me, the cost of Arq will offset itself in 6 months. But that’s not even what compels me to use it; it’s the freedom you gain. It’s knowing that if Amazon goes bananas and drops its price to $0.001 (in the updated table prices actually held steady or, for S3, went up; only Coldline got cheaper), you can switch clouds with a simple click, keeping the backup client you’re already familiar with. No alternate setup. No extra testing.
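The six-month figure presumably comes from dividing Arq's one-off $50 by the monthly saving: Crashplan's $9 minus the roughly $1 I pay on B2. A small sketch of that calculation, with `payback_months` being a hypothetical helper name:

```shell
#!/bin/sh
# Hypothetical helper: months until a one-off licence pays for itself
# compared with staying on a flat monthly subscription.
payback_months() {  # usage: payback_months <licence_usd> <old_monthly_usd> <new_monthly_usd>
    awk -v fee="$1" -v old_fee="$2" -v new_fee="$3" 'BEGIN {
        # No saving means the licence never pays for itself.
        if (old_fee <= new_fee) { print "never"; exit }
        printf "%.2f\n", fee / (old_fee - new_fee)
    }'
}

payback_months 50 9 1   # Arq licence, Crashplan fee, my B2 bill -> 6.25
```

The more data you store, the smaller the monthly saving and the longer the payback.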

Don’t just take my word for it:

- Arq Backup allows you to trial for a full month

- Most clouds give you some storage for free; Backblaze B2 gives you 10 GB just to test

This post is not sponsored, I put my money on software I believe in.

Edit:

Mike from PhotoKaz brought to my attention the price difference between B2 and regular Backblaze once the amount of data starts piling up:

> I have 5TB of data stored at Backblaze, if I put that on their B2 service it would cost >$300/year instead of $60. I’ll pass.

Regular Backblaze gives you unlimited data. Storage has a direct physical correlation to drives, so the idea of it being inexhaustible doesn’t scale. As Backblaze says, "BitCasa, Dell DataSafe, Xdrive, Mozy, Amazon, Microsoft and Crashplan all tried" but eventually discontinued their unlimited plans.

The only two still in the game are Backblaze and Carbonite, which can only offer it by offsetting the few customers who cost more than they pay with the returns from the majority.

As an end customer, you should go with the deal that best fits you.

As such, the cost-effective sweet spot for B2 is everything below 1TB.

| Size | Backblaze ($/year) | B2 ($/year) |
|---|---|---|
| 100GB | $60 | $6 |
| 500GB | $60 | $30 |
| 1TB | $60 | $60 |
| 5TB | $60 | $300 |
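The B2 column follows from the $0.005/GB/month price over twelve months, counting 1TB as 1000GB. A rough sketch that reproduces the table and picks the cheaper option per size; the helper name `b2_yearly` is hypothetical and the flat $60/year is the figure from the table:

```shell
#!/bin/sh
# Hypothetical helper: yearly B2 bill in whole dollars for a given size,
# assuming $0.005/GB/month and 1 TB = 1000 GB.
b2_yearly() {  # usage: b2_yearly <gb>
    awk -v gb="$1" 'BEGIN { printf "%d\n", int(gb * 0.005 * 12 + 0.5) }'
}

# Compare against the flat $60/year plan for each size in the table.
for gb in 100 500 1000 5000; do
    b2=$(b2_yearly "$gb")
    if [ "$b2" -lt 60 ]; then pick="B2"
    elif [ "$b2" -eq 60 ]; then pick="tie"
    else pick="Backblaze"
    fi
    echo "$gb GB: Backblaze \$60/year, B2 \$$b2/year -> $pick"
done
```

The tie lands at exactly 1000GB, which is where the sweet spot ends.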

But I like Arq Backup and its inherent flexibility so much that if I ever found myself on the 5TB+ tier, I’d probably still use the Arq client and set up a NAS at a remote location. Backups are completely encrypted, and I’d keep the flexibility of choice. Mind you, an 8TB NAS goes for $400, so cost-wise Backblaze’s home plan is probably still the better choice.

Thanks for the input, Mike!

Linux?

You should probably check Timeshift. But one of my favourite things about Linux is being able to do something like this one-liner:

    dd bs=1M if=/dev/sda | \
    pv -c | \
    gzip | \
    ssh -i ~/.ssh/remote.key frankie@192.168.1.5 \
    "split -b 2048m -d \
    - /cygdrive/d/backup-`hostname -s`.img.gz"

Breaking everything apart:

- `dd bs=1M if=/dev/sda` copies and converts (it's called dd because cc was already in use by the C compiler) the whole device at sda with a block size of 1M.

- `pv -c` monitors the progress of data through the pipe; the `-c` flag makes it use cursor positioning escape sequences.

- `gzip` compresses the transfer, and if it finds a large area of the disk empty (just zeros or ones) the speedup is just surreal.

- `ssh` opens a secure shell into 192.168.1.5 as user "frankie" using the private key "remote.key". The output file is named after the hostname in its short "-s" version.

- `split` splits the whole disk into chunks of 2GB ("-b"), using numerical suffixes ("-d").

When this command finishes, you have a bit by bit copy of a hard drive. It's a bit like magic.
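Getting the data back is the same pipeline in reverse: join the chunks in order, decompress, and write the result to a disk. What follows is a hedged sketch rather than part of my original setup. The function name `restore_image` and the paths in the usage line are hypothetical, and it assumes the chunks have been copied somewhere the restoring machine can read them. The target is overwritten without asking, so triple-check it.

```shell
#!/bin/sh
# Hypothetical helper: rebuild an image from the chunks produced by `split -d`.
# $1 = chunk prefix (e.g. /mnt/backup/backup-myhost.img.gz)
# $2 = target file or block device (overwritten without confirmation)
restore_image() {
    prefix=$1
    target=$2
    # The shell expands "$prefix"* in sorted order, which matches split's
    # numeric suffixes (00, 01, ...), so the chunks are joined in sequence.
    cat "$prefix"* | gunzip -c | dd of="$target" bs=1M
}

# Example (destructive, hypothetical paths and device name):
# restore_image /mnt/backup/backup-myhost.img.gz /dev/sdX
```

Restoring to a scratch file first is a cheap way to confirm the chunks are intact before touching a real disk.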

Whatever system you're using... just do your backups!

As an Amazon Associate I may earn from qualifying purchases on some links.
